Significance testing is used within crosstab reporting and bar charts on Methodify to identify which data points are significantly higher or lower than one another.

Significance testing is on by default at the 95% confidence level for all charts where stimuli (ideas, concepts, offers, etc.) are being compared, so that you can confidently identify how well they are performing relative to each other on each question. It is also on by default for all crosstabs, which allows you to easily see differences between subgroups (e.g. males versus females).

Significance testing is not on by default for other types of surveys or demographic charts because it isn’t always applicable, but you can still turn it on (or off) for an individual question by clicking the settings cog to the right of each chart. Within the settings you can also choose to see your data at the 90% confidence level.

Whatever setting is selected for each question on the platform is what will be applied to your downloadable report.

What Is Significance Testing?

In technical terms, a significance test is used to decide whether to reject the null hypothesis (the assumption that there is no real difference). In layman’s terms, it indicates how unlikely it is that a difference of the observed size arose by chance alone. That means, if a result is shown to be significantly higher or lower, we believe the difference is larger than can be explained by chance.

How Methodify Uses Significance Testing

To analyze the data, we use the z-test (test statistic Z). The test calculates a z-value, which is then compared to a critical value determined by the selected confidence level. If the z-value is greater than this critical value (or, in some cases, less than it), the result is deemed significant.

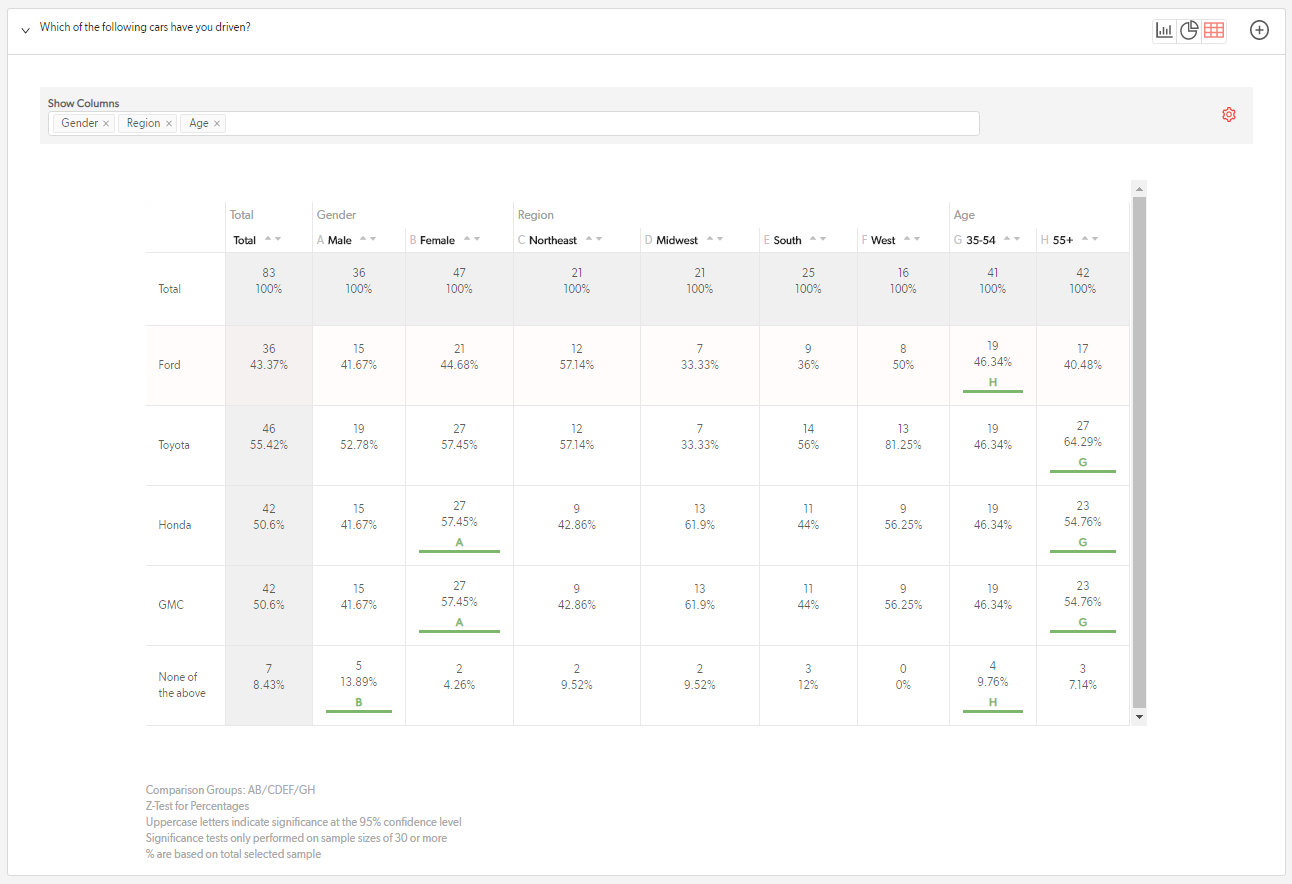
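To make the comparison concrete, here is a minimal Python sketch of a z-test on two percentages. It is an illustration only: it assumes a two-sided test on two independent proportions with a pooled standard error, which is a common textbook form and not necessarily the exact calculation Methodify runs. The function names (`two_proportion_z`, `critical_value`, `is_significant`) and the sample counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z(successes_a, n_a, successes_b, n_b):
    """z statistic for the difference between two independent proportions.

    Assumption: pooled standard error, as in the standard textbook z-test.
    Raises ZeroDivisionError if the pooled proportion is exactly 0 or 1.
    """
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    standard_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    return (p_a - p_b) / standard_error


def critical_value(confidence_level):
    """Two-sided critical z value (assumption: the test is two-sided)."""
    return NormalDist().inv_cdf(1 - (1 - confidence_level) / 2)


def is_significant(z, confidence_level=0.95):
    """True when |z| exceeds the critical value for the confidence level."""
    return abs(z) > critical_value(confidence_level)


# Hypothetical example: 118 of 200 respondents chose concept A, 100 of 200 chose concept B.
z = two_proportion_z(118, 200, 100, 200)
print(round(z, 2), is_significant(z, 0.90), is_significant(z, 0.95))
```

With these made-up counts the difference clears the 90% threshold but not the 95% one, which shows why switching the confidence level in the chart settings can change which results are flagged.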

Within the crosstab table and bar chart, each category of a variable is compared with the others to see whether it is significantly higher or lower. Where a category is significantly higher than another, an uppercase letter is shown to signal this.
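The pairwise comparison described above can be sketched in Python as follows. This is a hedged illustration, not Methodify's implementation: it assumes columns are lettered A, B, C, and so on, that each pair is compared with a pooled two-proportion z-test, and that a column is tagged with the letter of every column it is significantly higher than. The function name `flag_higher` and the counts are hypothetical.

```python
from itertools import permutations
from math import sqrt
from statistics import NormalDist
from string import ascii_uppercase


def flag_higher(counts, confidence_level=0.95):
    """Return, for each column, the letters of the columns it significantly beats.

    counts: list of (successes, base_size) tuples, one per column.
    Assumptions: columns are lettered A, B, C, ... in order, and each pair is
    compared with a pooled two-proportion z-test against a two-sided critical value.
    """
    critical = NormalDist().inv_cdf(1 - (1 - confidence_level) / 2)
    letters = ascii_uppercase[: len(counts)]
    flags = ["" for _ in counts]
    for i, j in permutations(range(len(counts)), 2):
        (x_i, n_i), (x_j, n_j) = counts[i], counts[j]
        pooled = (x_i + x_j) / (n_i + n_j)
        standard_error = sqrt(pooled * (1 - pooled) * (1 / n_i + 1 / n_j))
        if standard_error == 0:
            continue  # both columns are at 0% or 100%; nothing to compare
        z = (x_i / n_i - x_j / n_j) / standard_error
        if z > critical:
            flags[i] += letters[j]  # column i is significantly higher than column j
    return flags


# Hypothetical counts for three concepts, each shown to 200 respondents.
print(flag_higher([(120, 200), (100, 200), (80, 200)]))  # ['BC', 'C', '']
```

In this made-up example, column A is significantly higher than both B and C, column B is significantly higher than C, and column C carries no letter.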
